Before you mail us your Christmas cards this year, shoot me a text or an email and I will send you our new address. We moved to Atlanta this spring. It was somewhat out of the blue, but in hindsight it was probably for the best.

In 2015 we realized that we needed to move out of our house because it was two levels and I was having a harder and harder time with the stairs. We sat down, realizing that moving would only become more difficult for us as I progressed, and tried to figure out where we wanted to be long-term. We decided we were very happy with our lives in South Carolina, so we found a house in Columbia, totally renovated it, made sure it was completely accessible, and made it our home. As things typically play out, about a week after we moved in, the company Cara worked for was bought. Over the next year her job got crazier and crazier, and it was apparent that she needed to get out. Unfortunately, she was working at the only place in town that really did what she wanted to do. So we reevaluated our choices, she began looking for jobs in cities that we would consider moving to, and now we are in Atlanta.

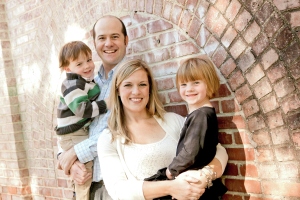

Timing-wise, the move wasn’t so bad. Mary Adair got to finish up first grade at a new school, and wasn’t yet too tied to her old one. James will start kindergarten in the fall, so he was already going to be at a different school from a bunch of his buddies. It meant that after a disappointing 4K year, he got a couple of months of Papa camp to get ready for the fall. I was already scheduled to go out on disability from the University of South Carolina. So other than leaving a bunch of really good friends, a routine we were super comfortable with, a city and a state that felt like home, and a house we had put a lot of effort into, it wasn’t such a big deal. But moving is never fun.

Mentally, the transition was sort of complicated for me. It was hard to separate what the move would do to me from what my progression would have done to me no matter where we lived. It was convenient to put on the calendar that once we moved to Atlanta, I would no longer drive. I had been saying I would drive for two more months for about three years, but the time had come. Mentally, though, it was easy to blame moving to Atlanta for not being able to drive. I was already scheduled to leave my job and stop going into my office, but the drop-dead date was determined by the move. I am typically able to be a somewhat rational person, so whenever I focused on this, I tried to stop myself and realize these changes were coming no matter what. But it wasn’t always easy.

There was this weird abrupt transition. When Cara started her job in Atlanta, the kids and I were still in Columbia while some work was done on our new house. One day, the three of us woke alone together in the old house. I drove us to Dunkin’ Donuts. We had breakfast. I drove them to school. I went to my office. I drove and picked them up. The three of us drove to the run-down hibachi restaurant around the corner from our house. And then we called it a night. The next day we moved, and in Atlanta I didn’t drive.

There were a million things to do around the house, but I couldn’t really do them. Things that I did 18 months before in our last move were now out of the question. Now they all fell on Cara. My job was to meet with the handyman and a handful of contractors coming in and out of the house, but even that got taxing. I got tired and didn’t notice a rug was lifted up a little bit, and I tripped, my head bouncing off the hardwood floor like a billiard ball that jumped off the table. I tried to take a shower in the bathroom we had just torn up to make accessible, and I slipped getting out. Hell, I read too many books in a row on my phone and all the swiping caused my left thumb to twitch for four days straight, leaving me dependent on my right middle finger for placing the Amazon orders and operating the remote. Going to new places is complicated, and when you move, everything is new. What is the entrance like? How crowded will it be? Do I need the scooter even if we are only going to be there for five minutes? Will there be any steps at all, making it a no-go?

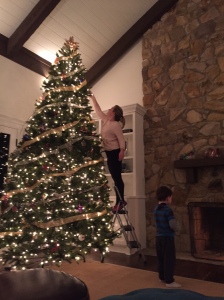

After living with this disease for four years, I am used to making adjustments. It just was a lot all at once. But after a couple of months, it is starting to feel like home again. I am used to the new house and intuitively know where the rugs are and where the furniture is. If the kids pick up their toys (if), I can comfortably walk around. I found a spot on the new couch where I can wedge myself down far enough so I can rest my neck. We figured out a solution to help me get out of the pool, so I still have that activity with the kids. We tested out MARTA, and if someone drops us off at the station, the kids and I can get ourselves to the aquarium and have adventures. I “walked” them to camp up the street this morning. After going to two Atlanta United matches (thanks, Jonathan and Arod!), all four of us are totally hooked. Mary Adair takes it almost as seriously as her papa. Almost. Once they move into their new stadium, we have ADA season tickets lined up. Mary Adair got Sorry and Trouble for her birthday and we have been having fun battling it out. Card games don’t really work for me, but if one of them pops the bubble in Trouble, or flips the cards in Sorry, we are good to go. We hired an assistant who helps me shower, get dressed, and run errands a couple of days a week. I had my first appointment at the Emory ALS clinic and am excited to be close to the therapists. Charlotte was great, but it is harder to have follow-up appointments when the clinic is 90 minutes away. The occupational therapist was eager to have me come into the office to show me all the gadgets they have for interacting with my phone or computer as my left thumb gives out for good. I went back and had a two-hour visit with the physical therapist, testing out all of the different wheelchairs and putting in my order.

And most importantly, the big things have gone smoothly. After her very first day at her new school, Mary Adair got off the bus beaming, and every morning after that she jumped out of bed at 6:20 ready to head off. James and I had a great couple of months of Papa camp, powering through workbooks, reading stories, and exploring the neighborhood. He has met a bunch of kids who will be rising kindergartners with him in the fall. Cara likes her new job. Her mom moved down here and is a huge help keeping the trains running on time.

Moving is always awful, and with ALS even more so. Adjustments are hard, and a bunch all at once are harder. But other than missing some really good folks in South Carolina (and that banged-up hibachi place), I think we have gotten it right.